Master Context Engineering with Google ADK: Transform Your Multi-Agent Systems

Reduce AI Operational Costs by 70% While Building Scalable Multi-Agent Workflows

Introduction

Organizations struggle to balance AI system performance against cost. Context engineering offers a strategic answer: the goal is not to cram more data into prompts, but to architect systems that manage that data intelligently.

Google’s recent breakthrough in context engineering reveals a fundamental shift: treat context as a compiled view over structured systems rather than a string buffer. This approach transforms how businesses build scalable AI applications.

We’ll explore practical context engineering implementation using Google’s ADK framework. You’ll discover how session state, artifacts, and memory create efficient multi-agent workflows that reduce costs while improving performance.

Before starting, install ADK (pip install google-adk) and obtain a Gemini API key. Implementation code appears throughout this guide.

Understanding Context Engineering Challenges

Modern AI development faces critical constraints that traditional approaches can’t address. Simply expanding context windows creates more problems than solutions.

Here’s what enterprises encounter when scaling AI systems:

Cost Escalation: Larger contexts mean higher operational costs. Input is typically billed per token, so a 100K-token prompt costs roughly ten times as much as a 10K-token prompt, and latency grows with prompt length as well. Across thousands of calls, the monthly AI budget escalates quickly.

Information Degradation: Models struggle to prioritize information in oversized contexts. When you flood prompts with outdated logs, irrelevant tool outputs, and deprecated system states, output quality degrades. Published long-context evaluations have found that models recall information less reliably when it is buried in the middle of a long prompt.

Scalability Barriers: Even million-token context windows have limits. Production workloads that combine RAG results, conversation histories, and tool outputs can fill them quickly, so scaling by window size alone is not sustainable.
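To put the cost point above in rough numbers, here is a minimal sketch that assumes a flat per-token input price. The price constant and the call volume are hypothetical placeholders, not real Gemini pricing; substitute the figures from your provider's pricing page.

```python
# Hypothetical flat input price; NOT a real Gemini rate.
PRICE_PER_MILLION_INPUT_TOKENS_USD = 1.00


def input_cost_usd(tokens_per_call: int, calls: int) -> float:
    """Input-token cost for `calls` invocations of `tokens_per_call` tokens each."""
    total_tokens = tokens_per_call * calls
    return total_tokens * PRICE_PER_MILLION_INPUT_TOKENS_USD / 1_000_000


# Assumed monthly volume, for illustration only.
MONTHLY_CALLS = 50_000

small = input_cost_usd(10_000, MONTHLY_CALLS)
large = input_cost_usd(100_000, MONTHLY_CALLS)

print(f"10K-token prompts:  ${small:,.2f} per month")
print(f"100K-token prompts: ${large:,.2f} per month")
print(f"Ratio: {large / small:.0f}x")
```

Under these assumptions the bill grows in step with prompt size, which is why trimming the context sent on each call has a direct effect on spend.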

The solution isn’t larger contexts—it’s smarter context engineering. Organizations need systematic approaches to context representation and management.

Context Engineering as Compiled Architecture

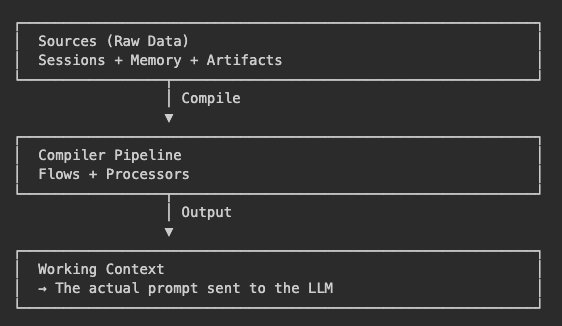

Google’s context engineering methodology revolutionizes how we think about AI system architecture. Instead of treating context as raw text manipulation, we’re building intelligent compilation systems.

Think of context engineering like software compilation. Raw data serves as source code, processing pipelines handle transformation, and working context delivers optimized output for specific AI model invocations.

This paradigm transforms context engineering from manual prompt crafting into a systematic engineering discipline.
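The compilation analogy can be sketched in a few lines of plain Python. This is an illustration only and does not use ADK's API: the `Event` type, the `compile_context` function, and the sample events are all hypothetical names and data invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One stored record: the 'source code' in the compilation analogy."""
    author: str  # e.g. "user", "agent", "tool"
    text: str


def compile_context(events: list[Event], max_events: int) -> str:
    """Compile stored events into the working context for one model call.

    Steps: drop raw tool output, keep the most recent events, render to text.
    """
    relevant = [e for e in events if e.author != "tool"]
    # Guard against max_events == 0, where a [-0:] slice would keep everything.
    recent = relevant[-max_events:] if max_events > 0 else []
    return "\n".join(f"{e.author}: {e.text}" for e in recent)


# Hypothetical stored history (the durable source).
session_events = [
    Event("user", "Summarize last week's sales."),
    Event("tool", "<4,000 rows of raw CSV>"),
    Event("agent", "Sales rose week over week."),
    Event("user", "Which region grew fastest?"),
]

# The compiled output is rebuilt for each invocation; the source is untouched.
working_context = compile_context(session_events, max_events=2)
print(working_context)
```

The stored events stay complete, while each model call sees only the compiled view, so the compile step can change without touching what is stored.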

Core Context Engineering Principles

Successful context engineering implementation relies on three foundational principles that guide enterprise-grade AI system development:

Storage-Presentation Separation — Sessions maintain persistent data storage while working contexts provide temporary views. These components evolve independently, allowing prompt formatting changes without storage schema migrations. This separation enables flexible system maintenance.

Explicit Processing Pipelines — Context compilation uses named, ordered processors rather than string concatenation. This approach makes transformations observable, testable, and maintainable. Development teams can debug and optimize each processing step.

Minimal Context Scoping — Model invocations receive only essential context. Agents access additional information through explicit tool calls rather than context flooding. This strategy optimizes performance while reducing costs.
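The three principles above can be sketched together in plain Python. This is not ADK's actual API: the session shape, the processor names, and the `load_artifact` tool are assumptions made for illustration.

```python
from typing import Callable, Optional

# A processor takes the current context lines and returns new ones.
Processor = Callable[[list[str]], list[str]]


def drop_tool_output(lines: list[str]) -> list[str]:
    return [line for line in lines if not line.startswith("tool:")]


def keep_last_three(lines: list[str]) -> list[str]:
    return lines[-3:]


# Explicit pipeline: named, ordered steps instead of ad hoc string concatenation.
PIPELINE: list[tuple[str, Processor]] = [
    ("drop_tool_output", drop_tool_output),
    ("keep_last_three", keep_last_three),
]


def build_working_context(session: dict, trace: Optional[list] = None) -> list[str]:
    """Build a temporary view over the session without mutating stored data."""
    lines = list(session["events"])  # copy: storage and presentation stay separate
    for name, processor in PIPELINE:
        lines = processor(lines)
        if trace is not None:
            trace.append((name, len(lines)))  # makes each step observable
    # Minimal scoping: expose artifact names only, never their contents.
    if session["artifacts"]:
        lines.append("artifacts available: " + ", ".join(sorted(session["artifacts"])))
    return lines


def load_artifact(session: dict, name: str) -> str:
    """Hypothetical tool the agent calls when it needs an artifact's contents."""
    return session["artifacts"][name]


# Hypothetical session data.
session = {
    "events": [
        "user: hi",
        "tool: <large raw dump>",
        "agent: hello",
        "user: where is the report?",
        "agent: see q3.csv",
    ],
    "artifacts": {"q3.csv": "region,revenue\nnorth,100\n"},
}

trace: list = []
context = build_working_context(session, trace)
print(context)
print(trace)
```

Because each step has a name, a test can assert on the trace or swap out a single processor, and the large artifact enters the prompt only if the agent explicitly calls `load_artifact`.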


Four-Layer Context Engineering Architecture

The ADK framework structures context engineering through four distinct architectural layers, each serving specific operational purposes: